Pickleball Vision: CV-Driven Match Analytics

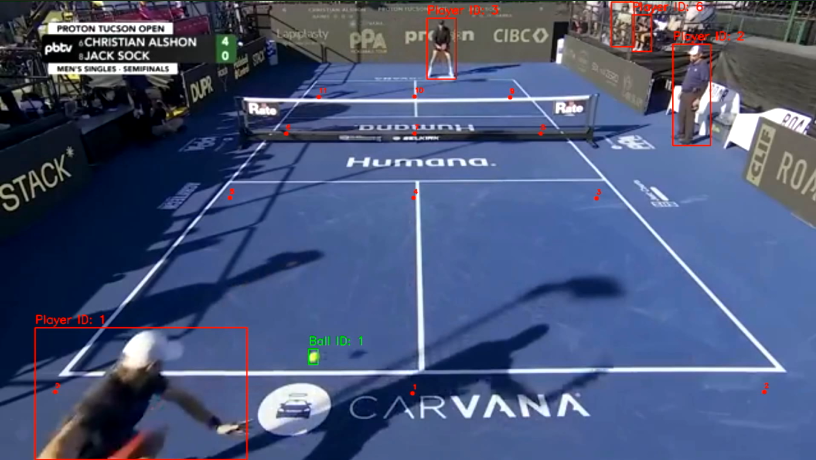

A computer-vision pipeline that turns a fixed-camera pickleball clip into an annotated video with player tracking, ball tracking, court geometry, top-down minimap, and per-frame analytics — ball speed, player speed/distance, shot count. YOLOv8 + a fine-tuned ResNet50 court keypoint regressor + iterative homography refinement.

In plain English

Tennis broadcasts have shot tracking. Major League Baseball has Statcast. Pickleball, the fastest-growing sport in the US, has nothing — match footage is just video, with no automated stats overlaid.

This project takes a fixed-camera video of a pickleball match and turns it into an annotated broadcast with player tracking, ball tracking, court geometry, a top-down minimap, ball speed in mph, per-player movement distance in feet, and shot count. All of it is computed automatically from raw video — no sensors, no manually placed cameras, no Hawk-Eye-style installation. Just whatever phone or DSLR is filming the match.

The pieces are well-known computer vision tools assembled carefully: YOLOv8 detects players and the ball; a fine-tuned ResNet50 finds the court’s lines and corners; the corners give a homography (the math that converts between “pixels in the video” and “feet on the actual court”). Once you have that homography, every other measurement — speed, distance, minimap position — is just geometry.
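As a rough illustration of the pixel-to-feet conversion described above, the sketch below solves for a homography from four court corners and applies it to a point. It is a minimal pure-Python version written for this explanation, not the project's code: the function names and the pixel coordinates are hypothetical, and a real pipeline would more likely use OpenCV for this step.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def homography_from_4_points(src: Sequence[Point], dst: Sequence[Point]) -> List[float]:
    """Return [h11, h12, h13, h21, h22, h23, h31, h32] with h33 fixed to 1."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        b.append(u)
        a.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        b.append(v)
    return solve_linear(a, b)


def pixel_to_feet(h: Sequence[float], p: Point) -> Point:
    """Apply the homography to one pixel, including the perspective divide."""
    x, y = p
    w = h[6] * x + h[7] * y + 1.0
    return ((h[0] * x + h[1] * y + h[2]) / w, (h[3] * x + h[4] * y + h[5]) / w)


# Hypothetical corner pixels (far-left, far-right, near-right, near-left)
# paired with the 20 x 44 ft court corners.
corners_px = [(500.0, 200.0), (780.0, 200.0), (1000.0, 650.0), (280.0, 650.0)]
corners_ft = [(0.0, 0.0), (20.0, 0.0), (20.0, 44.0), (0.0, 44.0)]
H = homography_from_4_points(corners_px, corners_ft)
```

Once `H` is known, any pixel on the court plane (a player's feet, a ball bounce) can be passed through `pixel_to_feet` to get court coordinates. Points above the court plane, such as a ball in flight, are not on that plane, so their projection is only approximate.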

The hard part isn’t the detection. It’s making the court geometry trustworthy under realistic camera angles, occlusion from players, and varying lighting. Without that, the speeds are made up. So most of the engineering is in the iterative homography refinement — the part that makes every “23 mph” number on the scoreboard true.

What’s in the output

- Player boxes: only the players actually on court, filtered from raw YOLO `person` detections (spectators dropped via court-geometry containment + minimum-track-length thresholding)

- Ball box + trail: smoothed and gap-interpolated trajectory with a fading trail

- Court keypoints: 12-point grid (4 horizontal lines × 3 columns) regressed by a fine-tuned ResNet50

- Minimap (top-right): top-down 20×44 ft court showing each player’s foot position and the ball location, projected via homography

- Scoreboard (top-left): running shot count, current and max ball speed (mph), per-player speed and total distance (ft)

- Shot markers: flash on screen when the ball is struck (velocity reversal near a player)
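The shot-marker rule (velocity reversal near a player) can be sketched as follows. This is an illustrative guess at the shape of the heuristic, not the contents of `shot_detector.py`: the function name, the 6 ft proximity radius, and the 5-frame debounce are all assumptions, and positions are taken to be court coordinates in feet with y running along the length of the court.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def detect_shots(
    ball: Sequence[Point],
    players: Sequence[Sequence[Point]],
    max_dist_ft: float = 6.0,   # assumed proximity radius
    min_gap_frames: int = 5,    # assumed debounce between shots
) -> List[int]:
    """Return frame indices where the ball reverses direction near a player.

    ball[i] is the ball position in frame i; players[i] lists the player
    positions in the same frame.
    """
    shots: List[int] = []
    for i in range(1, len(ball) - 1):
        vy_before = ball[i][1] - ball[i - 1][1]
        vy_after = ball[i + 1][1] - ball[i][1]
        reversed_direction = vy_before * vy_after < 0
        near_player = any(math.dist(ball[i], p) <= max_dist_ft for p in players[i])
        debounced = not shots or i - shots[-1] >= min_gap_frames
        if reversed_direction and near_player and debounced:
            shots.append(i)
    return shots


# Ball travels up the court, is struck near the far player, and comes back.
ball_path = [(10.0, float(y)) for y in (10, 15, 20, 25, 30, 35, 40, 35, 30, 25, 20)]
player_positions = [[(10.0, 2.0), (10.0, 42.0)] for _ in ball_path]
shot_frames = detect_shots(ball_path, player_positions)
```

Requiring a nearby player is what separates a struck ball from a bounce or a detection glitch, both of which can also flip the sign of the velocity.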

Court keypoint accuracy is the hard problem

A trained ResNet keypoint regressor can be 30–100 px off on unfamiliar camera angles. Player tracking is easy; getting the homography right is what makes every downstream metric (speed, distance, minimap projection) trustworthy. The pipeline applies multiple refinement strategies in priority order:

1. Manual override: if `input_videos/keypoints.json` exists, use it directly. This is the most accurate option for fixed-camera shots.

2. 4-boundary detection: locate the 2 baselines + 2 sidelines (the strongest court features) by clustering Hough segments and picking the extreme y/x clusters. Inner lines (the non-volley-zone lines and the net) are deliberately ignored to avoid the common “snap to net” failure mode. The 4 corners give a homography, which in turn yields all 12 canonical keypoints.

3. Model-prior snapping: fall back to per-row line snapping driven by the ResNet’s prediction.

4. Iterative pixel-level optimization: runs after either (2) or (3). Back-project every white-line pixel into court-feet, assign each to its nearest grid line, refit, and recompute the homography. Typically converges to ~0.2 ft mean residual.
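The assignment-and-residual step of the iterative optimization can be sketched as below. The grid positions are an assumption: the 4 horizontal lines are taken to be the two baselines plus the two non-volley-zone lines (7 ft either side of the net on a 44 ft court), and the 3 columns to be the sidelines plus the centreline. The function names are hypothetical, and the refit and homography update are not shown.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float]

# Assumed canonical grid, in feet (x across the court, y along it).
H_LINES_FT = (0.0, 15.0, 29.0, 44.0)   # baselines and non-volley-zone lines
V_LINES_FT = (0.0, 10.0, 20.0)         # sidelines and centreline


def nearest_line_residual(p: Point) -> float:
    """Distance in feet from a back-projected pixel to its nearest grid line."""
    x, y = p
    dx = min(abs(x - line_x) for line_x in V_LINES_FT)
    dy = min(abs(y - line_y) for line_y in H_LINES_FT)
    return min(dx, dy)


def mean_residual(points_ft: Iterable[Point]) -> float:
    """Mean nearest-line distance; the quantity the refinement drives down."""
    pts = list(points_ft)
    if not pts:
        raise ValueError("no line pixels to score")
    return sum(nearest_line_residual(p) for p in pts) / len(pts)


# Two back-projected white-line pixels: one 0.1 ft off a sideline,
# one 0.3 ft off a non-volley-zone line.
sample = [(0.1, 7.0), (5.0, 15.3)]
score = mean_residual(sample)
```

A residual of this kind gives the loop a stopping rule: iterate until the mean stops improving, and treat a final value far above the ~0.2 ft figure as a sign that the initial boundaries were wrong.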

Auto-labelled training data

To grow the keypoint training set without hand-labelling:

    python tools/generate_training_data.py --stride 6 --max-frames 60

This extracts every Nth frame, runs the 4-boundary detector + iterative refinement, and saves frame JPGs plus LabelMe-format JSONs that match the existing dataset format, so they can be merged in and used to retrain the ResNet for better generalization. Frames where boundary detection fails (heavy player occlusion) are skipped rather than given a bad label, because bad labels would silently degrade the next training round.
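The "skip, don't guess" behaviour can be sketched as a small sampling loop. This is not the contents of `tools/generate_training_data.py`: `auto_label` and its `detect` callback are hypothetical, and it is an assumption here that `--max-frames` caps the number of saved labels rather than the number of frames sampled.

```python
from typing import Callable, Iterable, List, Optional, Tuple, TypeVar

Frame = TypeVar("Frame")
Keypoints = List[Tuple[float, float]]


def auto_label(
    frames: Iterable[Frame],
    detect: Callable[[Frame], Optional[Keypoints]],
    stride: int = 6,
    max_frames: int = 60,
) -> List[Tuple[int, Keypoints]]:
    """Label every `stride`-th frame, skipping frames where detection fails.

    `detect` stands in for boundary detection + refinement and is expected
    to return None when it cannot find the court reliably.
    """
    labelled: List[Tuple[int, Keypoints]] = []
    for index, frame in enumerate(frames):
        if len(labelled) >= max_frames:
            break
        if index % stride != 0:
            continue
        keypoints = detect(frame)
        if keypoints is None:
            continue  # refuse to label rather than save a bad label
        labelled.append((index, keypoints))
    return labelled


def fake_detect(frame: int) -> Optional[Keypoints]:
    """Toy detector: pretend frame 12 is too occluded to label."""
    return None if frame == 12 else [(0.0, 0.0)]


labels = auto_label(range(30), fake_detect, stride=6, max_frames=60)
```

Returning `None` from the detector keeps the refusal explicit, so a failed frame can never be confused with a frame that produced a low-quality label.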

Architecture

    trackers/
      player_tracker.py          YOLO + court-aware filtering
      ball_tracker.py            anchor-based linker (high-conf seeds, forward/backward
                                 propagation with per-frame max-step constraint)
      motion_ball.py             frame-diff fallback for frames YOLO misses
    court_line_detector/
      court_line_detector.py     ResNet50 -> 12 keypoints (24 floats)
      refine.py                  Hough-line snapping + 4-corner homography refit
    mini_court/
      court_geometry.py          canonical keypoint -> feet, homography
      mini_court.py              top-down renderer
    analytics/
      shot_detector.py           velocity-reversal-near-player heuristic
      speed.py                   per-player and ball speeds in mph (via homography)

Improvements over the original

- Higher inference resolution: `imgsz=1280` (up from the YOLO default of 640) gives ~100% ball detection vs ~60%. The first run is slow because YOLO runs on every frame; detections are cached to `tracker_stubs/`, and subsequent runs skip inference unless `--no-cache` is passed.

- Anchor-based ball linker: replaced naive nearest-neighbor frame-to-frame association with high-confidence detection seeding + forward/backward propagation under a per-frame max-step constraint (the ball cannot teleport). Halves the ID-switch rate on noisy chunks.

- Court-aware player filter: drops `person` detections that fall outside the court polygon, as well as tracks shorter than a minimum-frames threshold. Spectators no longer pollute the metrics in stadium footage.

- Iterative homography refit: the white-line pixel back-projection loop. Previously the homography was fixed at the model’s first-pass prediction; now it self-corrects to ~0.2 ft mean residual.

- Pure-Python test suite: 20 unit tests covering geometry, smoothing, shot detection, and speed math. They run without torch / YOLO weights and finish in well under a second, catching regressions in the analytics pieces without paying for full inference.
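To make the anchor-based linking idea concrete, here is a minimal sketch of seeding from high-confidence detections and propagating in both directions under a max-step constraint. It is an assumption-laden illustration rather than the logic in `ball_tracker.py`: `link_ball`, the 0.8 seed confidence, and the 40 px per-frame step limit are hypothetical, and detections are simplified to `(x, y, confidence)` tuples.

```python
import math
from typing import List, Optional, Sequence, Tuple

Detection = Tuple[float, float, float]  # x, y, confidence
Point = Tuple[float, float]


def link_ball(
    dets: Sequence[Sequence[Detection]],
    seed_conf: float = 0.8,   # assumed confidence needed to act as an anchor
    max_step: float = 40.0,   # assumed max ball movement per frame, in pixels
) -> List[Optional[Point]]:
    """Return one ball position (or None) per frame."""
    n = len(dets)
    track: List[Optional[Point]] = [None] * n
    seeds: List[int] = []
    for i, candidates in enumerate(dets):
        best = max(candidates, key=lambda d: d[2], default=None)
        if best is not None and best[2] >= seed_conf:
            track[i] = (best[0], best[1])
            seeds.append(i)

    for direction in (1, -1):  # forward pass, then backward pass
        ordered = seeds if direction == 1 else list(reversed(seeds))
        for seed in ordered:
            last, last_i = track[seed], seed
            i = seed + direction
            while 0 <= i < n and track[i] is None:
                # Allow a larger jump when frames in between had no match.
                limit = max_step * abs(i - last_i)
                reachable = [d for d in dets[i] if math.dist(last, d[:2]) <= limit]
                if reachable:
                    pick = min(reachable, key=lambda d: math.dist(last, d[:2]))
                    track[i] = (pick[0], pick[1])
                    last, last_i = track[i], i
                i += direction
    return track


frames = [
    [(100.0, 100.0, 0.9)],                        # anchor
    [(110.0, 100.0, 0.3), (400.0, 300.0, 0.5)],   # low-conf true ball + distractor
    [],                                           # missed frame
    [(135.0, 100.0, 0.4)],                        # reachable across the gap
    [(500.0, 500.0, 0.6)],                        # too far away: rejected
]
linked = link_ball(frames)
```

In frame 1 the distractor has the higher confidence but is rejected because it is unreachable from the anchor, which is the failure that purely confidence-driven or nearest-neighbor association tends to get wrong.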

Stack

- Python 3.10+, `ultralytics` (YOLOv8), `pytorch`, `opencv-python`, `numpy`, `pandas`

- Trained ball detector (`models/yolo5_last.pt`) on hand-labelled pickleball footage

- Trained court keypoint model (`models/keypoints_model.pth`): ResNet50 backbone + regression head

What it demonstrates

- Multi-stage CV pipeline where each stage is testable in isolation

- Homography-driven measurement: every ft / mph number is geometry, not guesswork

- Auto-labelling loop that knows when to refuse to label

- Caching strategy that makes iterative work tractable on a single machine
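The "every ft / mph number is geometry" point reduces to a unit conversion once positions are in court feet. The helper names below are hypothetical and the frame rate is an input the caller must supply; any smoothing the pipeline applies before computing speeds is left out.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]

FEET_PER_MILE = 5280.0
SECONDS_PER_HOUR = 3600.0


def speed_mph(p0: Point, p1: Point, frames_elapsed: int, fps: float) -> float:
    """Average speed between two court positions (feet), in miles per hour."""
    if frames_elapsed <= 0 or fps <= 0:
        raise ValueError("frames_elapsed and fps must be positive")
    feet = math.dist(p0, p1)
    seconds = frames_elapsed / fps
    return feet / seconds * SECONDS_PER_HOUR / FEET_PER_MILE


def total_distance_ft(path: Sequence[Point]) -> float:
    """Sum of straight-line segments along a player's court-feet path."""
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


# Ball covers half the court length (22 ft) in 15 frames at 30 fps:
# 22 ft / 0.5 s = 44 ft/s, which is 30 mph.
ball_speed = speed_mph((10.0, 0.0), (10.0, 22.0), frames_elapsed=15, fps=30.0)
walked = total_distance_ft([(0.0, 0.0), (3.0, 4.0), (3.0, 10.0)])
```

Because both helpers take feet as input, any error in the homography passes straight through into the reported mph and distance figures, which is why the court geometry gets most of the engineering attention.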