LSTM-Driven Poker Analytics & Bluff Prediction Platform

Developed an end-to-end machine learning pipeline that extracts, cleans, and engineers features from over 7,000 real-money hand histories from PokerNow.club. Using engineered features (bet ratios, decision times, board evaluations with Ace detection, and dynamic positional metrics) and a custom LSTM model with dynamic bucketing, the system predicts whether a bet is a bluff or a value bet, with a test AUC of 0.77. Hyperparameter tuning, cross-validation, and class balancing were key to reaching that performance.

In plain English

When someone makes a big bet in poker, they’re either bluffing (their hand is weak and they want you to fold) or value-betting (their hand is strong and they want you to call). Telling the difference is the entire game. Skilled players use timing, bet sizing, board texture, and their opponent’s history of plays to make educated guesses.

This project asks: can a neural network learn to tell the difference, given the same information a human player has? I scraped over 7,000 real-money hands from PokerNow.club (a popular online play-money and small-stakes site, blinds from $0.25/$0.50 to $2/$5), engineered features that capture how each hand played out — bet sizes relative to the pot, decision times (a human takes longer when the decision is close), board texture (paired? flush-draw? Ace on board?), positional context — and trained an LSTM (a type of recurrent neural network designed for variable-length sequences) to predict, at the moment a player makes a big bet, whether it’s a bluff or a value bet.

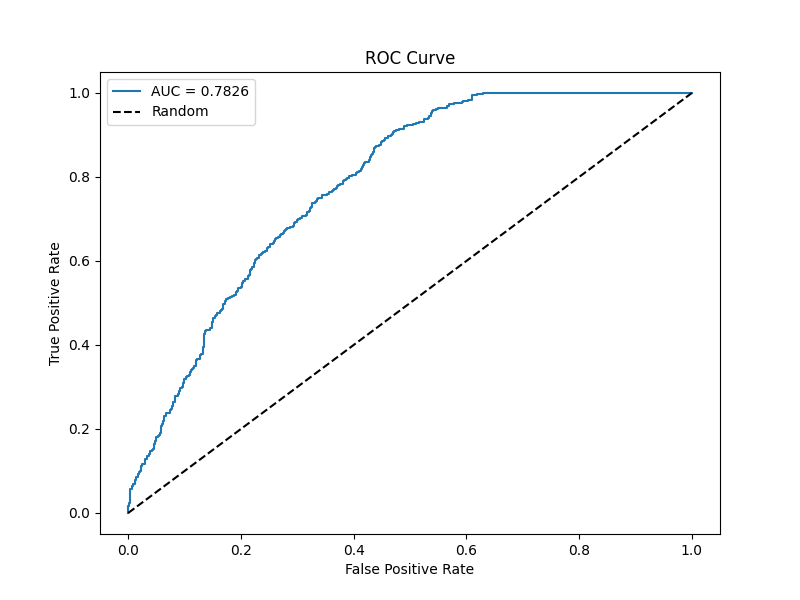

Final test AUC: 0.77. On hands the model has never seen, a randomly chosen bluff receives a higher bluff score than a randomly chosen value bet about 77% of the time (0.5 would be chance). This is a ranking measure, not an accuracy figure. The interesting part isn’t just the number; it’s which features the model relies on, which gives a quantitative picture of which tells human players are actually leaking at low-to-mid stakes.
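
One way to read an AUC figure is as a ranking probability. The sketch below computes it that way on made-up scores; the labels, scores, and the encoding of bluff as 1 are illustrative assumptions, not project data.

```python
import numpy as np

def pairwise_auc(y_true, y_score):
    """AUC as the probability that a random positive outranks a random negative."""
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score, dtype=float)
    pos = y_score[y_true == 1]  # scores for bluffs (label 1, an assumed encoding)
    neg = y_score[y_true == 0]  # scores for value bets (label 0)
    # Compare every positive score with every negative score; ties count as half.
    diff = pos[:, None] - neg[None, :]
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return wins / (len(pos) * len(neg))

# Toy data: 3 bluffs, 3 value bets, hypothetical model scores.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.6, 0.4, 0.7, 0.3, 0.2]
print(pairwise_auc(labels, scores))
```

In this toy case 7 of the 9 bluff/value-bet pairs are ordered correctly, so the AUC is 7/9, or about 0.78.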

Technical introduction

The system processes over 7,000 hands (with blinds from $0.25/$0.50 up to $2/$5) and derives features such as bet ratios, log-transformed decision times, board evaluations with Ace detection, and dynamic positional metrics. A custom LSTM model, which uses dynamic bucketing to handle variable-length sequences, predicts whether the villain’s betting action is a bluff or a value bet, achieving a test AUC of 0.77.
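
As a rough illustration of the kinds of features named above, here is a minimal sketch. The function names, the card format (rank then suit, e.g. `"Ah"`, with `T` for ten), and the exact definitions are assumptions for illustration and not necessarily how the project computes them.

```python
import math

def bet_ratio(bet, pot):
    """Bet size as a fraction of the pot before the bet."""
    return bet / pot if pot > 0 else 0.0

def log_decision_time(seconds):
    """log(1 + t) compresses the long tail of slow decisions."""
    return math.log1p(max(seconds, 0.0))

def board_features(board):
    """Texture flags for a board given as cards like 'Ah' (assumed format)."""
    ranks = [card[0] for card in board]
    suits = [card[1] for card in board]
    return {
        "has_ace": "A" in ranks,
        "paired": len(set(ranks)) < len(ranks),
        # Largest number of board cards sharing a suit
        # (2 on the flop means a flush draw is possible).
        "max_suited": max(suits.count(s) for s in set(suits)),
    }

print(bet_ratio(75, 100))
print(log_decision_time(4.0))
print(board_features(["Ah", "Kd", "Kh"]))
```

The log transform is a common choice for timing data because a few very slow decisions would otherwise dominate the scale.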

Output

Below is a screenshot from the model evaluation dashboard displaying the confusion matrix, ROC curve, and feature importance chart:

Models & Techniques Used

- LSTM Network with Dynamic Bucketing: Processes variable-length sequences of poker actions.

- Bidirectional LSTM Layers: Capture context from both past and future actions.

- Advanced Feature Engineering: Incorporates bet ratios, decision times (log-transformed), board evaluations (with Ace detection), and positional metrics.

- Cross-Validation & Class Balancing: Keep performance estimates robust and correct for the slight class imbalance (52% bluffs).
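
The cross-validation and class-balancing step can be sketched with scikit-learn as follows. This is a minimal sketch on synthetic labels that match the 52% bluff rate; the label encoding (1 = bluff), the fold count, and the use of "balanced" weights are assumptions, not confirmed details of the project.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold
from sklearn.utils.class_weight import compute_class_weight

# Synthetic labels with a 52% bluff rate (1 = bluff, an assumed encoding).
y = np.array([1] * 52 + [0] * 48)
X = np.zeros((len(y), 1))  # placeholder features; only the labels matter for splitting

# "balanced" weights are n_samples / (n_classes * class_count).
classes = np.array([0, 1])
weights = compute_class_weight(class_weight="balanced", classes=classes, y=y)
class_weight = {int(c): float(w) for c, w in zip(classes, weights)}

# Stratified folds keep the bluff rate roughly constant in every validation split.
skf = StratifiedKFold(n_splits=4, shuffle=True, random_state=0)
fold_bluff_rates = [float(y[val_idx].mean()) for _, val_idx in skf.split(X, y)]

print(class_weight)
print(fold_bluff_rates)
```

A dictionary of this shape can be passed as the `class_weight` argument of Keras `model.fit`. With a split this close to even, the weights stay near 1, so the correction is small.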

Training

- Data Preprocessing: Raw hand histories are cleaned, features are engineered, and a sequence of actions is built for each hand. Numerical features are standardized and categorical features are one-hot encoded.

- LSTM Model Training: The model combines Bidirectional LSTM layers with dropout, batch normalization, and L1/L2 regularization. Training uses early stopping and learning rate reduction, and performance is assessed with cross-validation.

- Dynamic Bucketing: Instead of padding all sequences to a global maximum, hands are bucketed by similar sequence lengths to reduce wasted computation and improve training efficiency.
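
The bucketing idea can be sketched in plain NumPy. This is an illustrative version, not the project's implementation: the function name, bucket width, batch size, and feature count are assumptions, and a real pipeline would also carry labels and shuffle batches.

```python
import numpy as np

def bucket_batches(sequences, bucket_width=4, batch_size=2):
    """Group sequences by length bucket and pad each batch to its own max length."""
    buckets = {}
    for seq in sequences:
        # Lengths 1..4 share bucket 0, 5..8 share bucket 1, and so on.
        key = (len(seq) - 1) // bucket_width
        buckets.setdefault(key, []).append(seq)

    batches = []
    for key in sorted(buckets):
        group = buckets[key]
        for start in range(0, len(group), batch_size):
            chunk = group[start:start + batch_size]
            max_len = max(len(s) for s in chunk)
            n_features = chunk[0].shape[1]
            padded = np.zeros((len(chunk), max_len, n_features), dtype=np.float32)
            for i, s in enumerate(chunk):
                padded[i, :len(s)] = s  # zero-pad at the end of each sequence
            batches.append(padded)
    return batches

# Toy hands: each is (num_actions, num_features), with a hypothetical 3 features.
rng = np.random.default_rng(0)
hands = [rng.normal(size=(n, 3)) for n in (2, 3, 9, 10, 4, 12)]
batches = bucket_batches(hands)
print([b.shape for b in batches])
```

For these six toy hands, the bucketed batches hold 42 timesteps in total, of which 40 are real. Padding every hand to the global maximum of 12 would instead need 72.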

Requirements

- Python 3.8+

- TensorFlow 2.x

- Pandas, NumPy, Scikit-Learn

- Matplotlib, Seaborn (for visualization)